Global technology company Google has introduced Nano Banana 2, its latest image generation model built on Gemini 3.1 Flash Image, as the firm moves to strengthen its position in the rapidly evolving multimodal artificial intelligence market.

The new model combines the speed of the Flash architecture with the reasoning and visual quality previously associated with premium-tier models. It expands access to advanced AI imaging tools across Google’s ecosystem, including the Gemini app, Search, Ads, developer platforms and enterprise cloud services.

The rollout reflects a broader industry push among large technology firms to deliver faster, more capable generative AI tools while embedding them directly into everyday productivity and creative workflows.

Faster multimodal capabilities

Nano Banana 2 succeeds Google’s earlier Nano Banana and Nano Banana Pro image models, both of which gained traction among creators and developers for their editing capabilities and photorealistic rendering.

According to the company, the latest release focuses on rapid iteration, allowing users to generate, modify and refine images quickly while maintaining fidelity and contextual accuracy.

Central to this capability is the integration of Gemini Flash’s inference architecture with image generation functions. The design allows the model to interpret complex instructions and produce visual outputs quickly, narrowing the traditional trade-off between quality and processing time that has historically constrained generative image systems.

Google said the model also leverages real-world knowledge drawn from Gemini’s broader intelligence stack, enabling it to generate more accurate depictions of people, locations, products and concepts. The company indicated that this capability supports use cases such as infographic creation, diagram generation and data visualization, which increasingly sit at the intersection of text and image AI.

Precision text and localization features

A key enhancement in Nano Banana 2 is improved text rendering within images, an area that has posed technical challenges across generative AI models.

The system is designed to produce legible text elements for applications including marketing mock-ups, social media creatives, greeting cards and product visuals. Additionally, translation functionality embedded within the model enables users to localize textual elements directly inside generated images, supporting global content distribution.

For businesses operating across multiple markets, these capabilities could reduce design turnaround times and simplify multilingual campaign development.

Expanded creative control

Google said Nano Banana 2 introduces several workflow-level improvements aimed at creators and professional users.

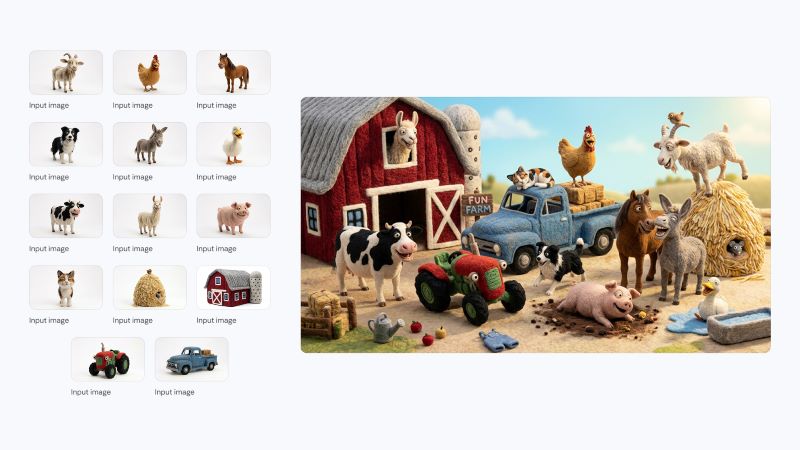

Among these is subject consistency functionality, which allows users to maintain the visual identity of multiple characters or objects across a sequence of generated images. This capability is expected to support storyboarding, brand campaigns and multimedia production where continuity is critical.

The model can also preserve the fidelity of numerous objects within a single generation workflow, a feature designed to support more complex scenes without degrading image quality.

Instruction adherence has similarly been strengthened, with Google highlighting improved responsiveness to detailed prompts that specify style, lighting, composition and contextual elements.

From a production standpoint, the model supports a wide range of aspect ratios and resolutions, from lower-resolution formats to 4K outputs, enabling deployment across social platforms, websites, presentations and large-format displays.

The company also noted improvements in lighting realism, texture richness and overall image sharpness, positioning Nano Banana 2 as capable of delivering studio-grade visuals at Flash processing speeds.

Integration across Google ecosystem

Nano Banana 2 is being deployed across multiple Google services, signalling the company’s strategy of embedding generative AI into both consumer and enterprise environments.

Within the Gemini app, the model will become the default image generation engine across various usage modes, while users requiring specialized high-fidelity workflows will retain access to Nano Banana Pro through regeneration options.

Search integration is also underway, with Nano Banana 2 powering visual generation features within AI Mode and Lens experiences across mobile and desktop interfaces.

For developers, the model is available in preview through AI Studio and the Gemini API, enabling application builders to integrate image generation directly into software products.

Enterprise customers can access the model in preview on Google Cloud through the Gemini API in Vertex AI, expanding opportunities for corporate deployment of multimodal AI in marketing, design automation and content production.

The model also becomes the default image generator within Flow and will support creative suggestion workflows inside Google Ads, highlighting its role in commercial campaign development.

Market and competitive implications

The launch of Nano Banana 2 comes amid intensifying competition in the generative AI sector, where leading technology firms are racing to deliver multimodal systems capable of producing text, images, video and audio within unified environments.

By positioning Nano Banana 2 as both a consumer-accessible and enterprise-ready tool, Google appears to be pursuing a platform strategy that encourages ecosystem lock-in while lowering barriers to experimentation.

For advertisers and creative agencies, faster iteration cycles and integrated localization tools could translate into shorter production timelines and lower operational costs. Meanwhile, developers may benefit from embedding visual generation into applications without relying on external design resources.

In emerging digital markets such as Kenya, where creative entrepreneurship and digital marketing are expanding rapidly, the availability of integrated image generation tools could accelerate content production for small businesses, online sellers and media startups.

Technology analysts note that the success of such models will depend not only on performance but also on trust and transparency mechanisms that address concerns around synthetic media authenticity.

Provenance and verification framework

Alongside the model release, Google highlighted its ongoing efforts to strengthen provenance and verification systems for AI-generated content.

The company is combining its SynthID watermarking technology with interoperable Content Credentials frameworks to provide contextual information about how images are generated and modified.

Usage metrics indicate growing adoption of verification tools within Gemini, with millions of checks conducted across multiple languages since the tools launched. Google said additional verification functionality will be introduced within the Gemini app as part of the broader provenance initiative.

These developments reflect mounting global attention on AI transparency, particularly as generative tools become more capable of producing realistic synthetic media that can influence public discourse, marketing and information ecosystems.

Outlook

Nano Banana 2’s launch underscores Google’s continued investment in multimodal AI as a foundational layer across its consumer, developer and enterprise offerings.

By merging speed, reasoning and visual quality into a single model while distributing it widely across products, the company is advancing a strategy aimed at normalizing AI-assisted creativity within everyday digital workflows.

As adoption grows, the impact of such tools is likely to extend beyond creative industries into areas including education, e-commerce, journalism, software development and digital advertising, where visual content plays a central role in engagement and communication.